Crash Dump Analysis Patterns (Part 39)

Friday, November 23rd, 2007

As mentioned in the Early Crash Dump pattern, saving crash dumps on first-chance exceptions helps to diagnose components that might have caused corruption and later crashes, hangs or CPU spikes by ignoring abnormal exceptions such as access violations. In such cases we need to know whether an application installs its own Custom Exception Handler or several of them. If it uses only the default handlers provided by the runtime or the Windows subsystem, then a first-chance access violation exception will most likely result in a last-chance exception and a postmortem dump. To check the chain of exception handlers we can use the WinDbg !exchain extension command. For example:

0:000> !exchain

0017f9d8: TestDefaultDebugger!AfxWinMain+3f5 (00420aa9)

0017fa60: TestDefaultDebugger!AfxWinMain+34c (00420a00)

0017fb20: user32!_except_handler4+0 (770780eb)

0017fcc0: user32!_except_handler4+0 (770780eb)

0017fd24: user32!_except_handler4+0 (770780eb)

0017fe40: TestDefaultDebugger!AfxWinMain+16e (00420822)

0017feec: TestDefaultDebugger!AfxWinMain+797 (00420e4b)

0017ff90: TestDefaultDebugger!_except_handler4+0 (00410e00)

0017ffdc: ntdll!_except_handler4+0 (77961c78)

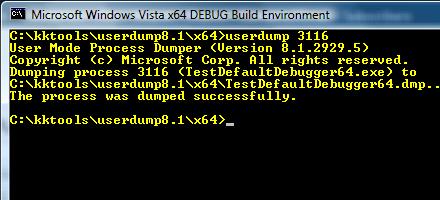

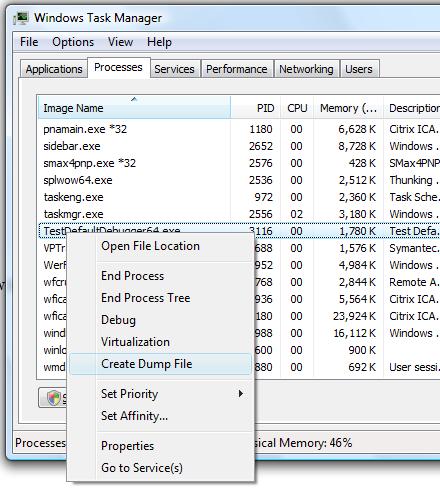

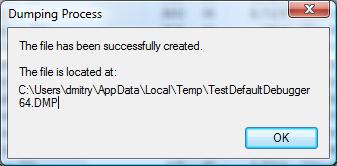

We see that TestDefaultDebugger doesn’t have its own exception handlers apart from those provided by the MFC and C/C++ runtime libraries, which were linked statically. Here is another example. It was reported that a 3rd-party application was hanging and spiking CPU (Spiking Thread pattern), so a user dump was saved using the command-line tool userdump.exe:

0:000> vertarget

Windows Server 2003 Version 3790 (Service Pack 2) MP (4 procs) Free x86 compatible

Product: Server, suite: TerminalServer

kernel32.dll version: 5.2.3790.4062 (srv03_sp2_gdr.070417-0203)

Debug session time: Thu Nov 22 12:45:59.000 2007 (GMT+0)

System Uptime: 0 days 10:43:07.667

Process Uptime: 0 days 4:51:32.000

Kernel time: 0 days 0:08:04.000

User time: 0 days 0:23:09.000

0:000> !runaway 3

User Mode Time

Thread Time

0:1c1c 0 days 0:08:04.218

1:2e04 0 days 0:00:00.015

Kernel Mode Time

Thread Time

0:1c1c 0 days 0:23:09.156

1:2e04 0 days 0:00:00.031

0:000> kL

ChildEBP RetAddr

0012fb80 7739bf53 ntdll!KiFastSystemCallRet

0012fbb4 05ca73b0 user32!NtUserWaitMessage+0xc

WARNING: Stack unwind information not available. Following frames may be wrong.

0012fd20 05c8be3f 3rdPartyDLL+0x573b0

0012fd50 05c9e9ea 3rdPartyDLL+0x3be3f

0012fd68 7739b6e3 3rdPartyDLL+0x4e9ea

0012fd94 7739b874 user32!InternalCallWinProc+0x28

0012fe0c 7739c8b8 user32!UserCallWinProcCheckWow+0x151

0012fe68 7739c9c6 user32!DispatchClientMessage+0xd9

0012fe90 7c828536 user32!__fnDWORD+0x24

0012febc 7739d1ec ntdll!KiUserCallbackDispatcher+0x2e

0012fef8 7738cee9 user32!NtUserMessageCall+0xc

0012ff18 0050aea9 user32!SendMessageA+0x7f

0012ff70 00452ae4 3rdPartyApp+0x10aea9

0012ffac 00511941 3rdPartyApp+0x52ae4

0012ffc0 77e6f23b 3rdPartyApp+0x111941

0012fff0 00000000 kernel32!BaseProcessStart+0x23
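When a dump has many threads, the !runaway 3 output above can be totalled per thread to find the one consuming CPU. The following is a minimal Python sketch, assuming the output has been copied into a string; the function name `total_cpu_time` and the regular expression are illustrative and are not part of WinDbg.

```python
import re
from datetime import timedelta

# Matches thread rows such as "0:1c1c 0 days 0:08:04.218".
# Assumption: the row layout is exactly as shown in the session above.
THREAD_RE = re.compile(
    r"^(\d+):([0-9a-fA-F]+)\s+(\d+) days (\d+):(\d{2}):(\d{2})\.(\d{3})$"
)


def total_cpu_time(runaway_output):
    """Sum the user-mode and kernel-mode rows for each thread."""
    totals = {}
    for line in runaway_output.splitlines():
        match = THREAD_RE.match(line.strip())
        if not match:
            continue  # table headings and blank lines
        index, tid, days, hours, minutes, seconds, millis = match.groups()
        spent = timedelta(
            days=int(days),
            hours=int(hours),
            minutes=int(minutes),
            seconds=int(seconds),
            milliseconds=int(millis),
        )
        key = f"{index}:{tid}"
        totals[key] = totals.get(key, timedelta()) + spent
    return totals


SAMPLE = """\
User Mode Time
Thread Time
0:1c1c 0 days 0:08:04.218
1:2e04 0 days 0:00:00.015
Kernel Mode Time
Thread Time
0:1c1c 0 days 0:23:09.156
1:2e04 0 days 0:00:00.031
"""

if __name__ == "__main__":
    totals = total_cpu_time(SAMPLE)
    busiest = max(totals, key=totals.get)
    print(busiest, totals[busiest])
```

Because both tables are added together, the result does not depend on which table a given time was reported under. For this dump the busiest thread is 0:1c1c, the same thread whose stack is shown above.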

The exception chain showed custom exception handlers:

0:000> !exchain

0012fb8c: 3rdPartyDLL+57acb (05ca7acb)

0012fd28: 3rdPartyDLL+3be57 (05c8be57)

0012fd34: 3rdPartyDLL+3be68 (05c8be68)

0012fdfc: user32!_except_handler3+0 (773aaf18)

CRT scope 0, func: user32!UserCallWinProcCheckWow+156 (773ba9ad)

0012fe58: user32!_except_handler3+0 (773aaf18)

0012fea0: ntdll!KiUserCallbackExceptionHandler+0 (7c8284e8)

0012ff3c: 3rdPartyApp+53310 (00453310)

0012ff48: 3rdPartyApp+5334b (0045334b)

0012ff9c: 3rdPartyApp+52d06 (00452d06)

0012ffb4: 3rdPartyApp+38d4 (004038d4)

0012ffe0: kernel32!_except_handler3+0 (77e61a60)

CRT scope 0, filter: kernel32!BaseProcessStart+29 (77e76a10)

func: kernel32!BaseProcessStart+3a (77e81469)

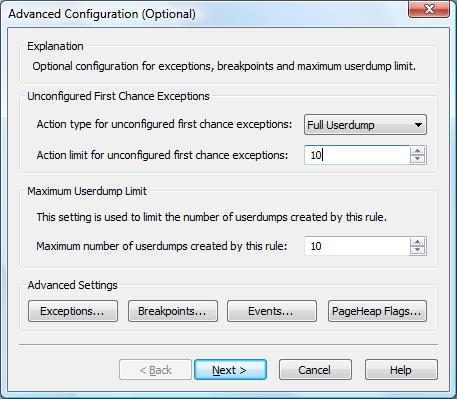

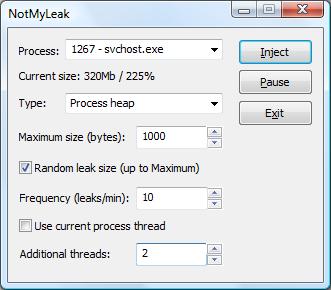
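Reading a long handler chain by eye is error-prone, so it can help to tally handler records per module. The following is a minimal Python sketch, assuming the !exchain output has been pasted into a string; the names `handlers_by_module`, `custom_handler_modules` and the `SYSTEM_MODULES` set are hypothetical choices for this illustration, not part of any debugger API.

```python
import re
from collections import Counter

# Matches handler records such as
#   "0012fb8c: 3rdPartyDLL+57acb (05ca7acb)"
#   "0012fdfc: user32!_except_handler3+0 (773aaf18)"
# and captures the module name that precedes "!" or "+".
HANDLER_RE = re.compile(r"^[0-9a-fA-F]{8}:\s+([^!+\s]+)[!+]")

# Assumption: modules treated as OS-provided for this illustration only.
SYSTEM_MODULES = {"ntdll", "kernel32", "user32"}


def handlers_by_module(exchain_output):
    """Count exception handler records per module in !exchain output."""
    counts = Counter()
    for line in exchain_output.splitlines():
        match = HANDLER_RE.match(line.strip())
        if match:  # "CRT scope" and "func:" detail lines do not match
            counts[match.group(1)] += 1
    return counts


def custom_handler_modules(exchain_output):
    """Return, sorted, the modules outside SYSTEM_MODULES that own handlers."""
    counts = handlers_by_module(exchain_output)
    return sorted(m for m in counts if m.lower() not in SYSTEM_MODULES)


SAMPLE = """\
0012fb8c: 3rdPartyDLL+57acb (05ca7acb)
0012fd28: 3rdPartyDLL+3be57 (05c8be57)
0012fd34: 3rdPartyDLL+3be68 (05c8be68)
0012fdfc: user32!_except_handler3+0 (773aaf18)
CRT scope 0, func: user32!UserCallWinProcCheckWow+156 (773ba9ad)
0012fe58: user32!_except_handler3+0 (773aaf18)
0012fea0: ntdll!KiUserCallbackExceptionHandler+0 (7c8284e8)
0012ff3c: 3rdPartyApp+53310 (00453310)
0012ff48: 3rdPartyApp+5334b (0045334b)
0012ff9c: 3rdPartyApp+52d06 (00452d06)
0012ffb4: 3rdPartyApp+38d4 (004038d4)
0012ffe0: kernel32!_except_handler3+0 (77e61a60)
CRT scope 0, filter: kernel32!BaseProcessStart+29 (77e76a10)
func: kernel32!BaseProcessStart+3a (77e81469)
"""

if __name__ == "__main__":
    print(handlers_by_module(SAMPLE))
    print(custom_handler_modules(SAMPLE))
```

A module name alone does not prove that a handler is custom. In the first example every TestDefaultDebugger handler would be flagged, although the symbols show they come from statically linked MFC and C/C++ runtime code, so the tally is only a starting point for inspecting the handler addresses.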

The customer then enabled MS Exception Monitor and selected only the access violation exception code (c0000005) to avoid False Positive Dumps. During application execution, various first-chance exception crash dumps were saved, pointing to numerous access violations, including function calls into unloaded modules, for example:

0:000> kL 100

ChildEBP RetAddr

WARNING: Frame IP not in any known module. Following frames may be wrong.

0012f910 7739b6e3 <Unloaded_Another3rdParty.dll>+0x4ce58

0012f93c 7739b874 user32!InternalCallWinProc+0x28

0012f9b4 7739c8b8 user32!UserCallWinProcCheckWow+0x151

0012fa10 7739c9c6 user32!DispatchClientMessage+0xd9

0012fa38 7c828536 user32!__fnDWORD+0x24

0012fa64 7739d1ec ntdll!KiUserCallbackDispatcher+0x2e

0012faa0 7738cee9 user32!NtUserMessageCall+0xc

0012fac0 0a0f2e01 user32!SendMessageA+0x7f

0012fae4 0a0f2ac7 3rdPartyDLL+0x52e01

0012fb60 7c81a352 3rdPartyDLL+0x52ac7

0012fb80 7c839dee ntdll!LdrpCallInitRoutine+0x14

0012fc94 77e6b1bb ntdll!LdrUnloadDll+0x41a

0012fca8 0050c9c1 kernel32!FreeLibrary+0x41

0012fdf4 004374af 3rdPartyApp+0x10c9c1

0012fe24 0044a076 3rdPartyApp+0x374af

0012fe3c 7739b6e3 3rdPartyApp+0x4a076

0012fe68 7739b874 user32!InternalCallWinProc+0x28

0012fee0 7739ba92 user32!UserCallWinProcCheckWow+0x151

0012ff48 773a16e5 user32!DispatchMessageWorker+0x327

0012ff58 00452aa0 user32!DispatchMessageA+0xf

0012ffac 00511941 3rdPartyApp+0x52aa0

0012ffc0 77e6f23b 3rdPartyApp+0x111941

0012fff0 00000000 kernel32!BaseProcessStart+0x23
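When many first-chance dumps are collected, the stack traces can be screened for frames that fall into unloaded modules, like the top frame above. The following is a minimal Python sketch, assuming kL output saved as text; `unloaded_frames` and `unload_in_progress` are hypothetical helper names, and the patterns rely only on the frame layout visible in the trace above.

```python
import re

# Matches a frame such as
#   "0012f910 7739b6e3 <Unloaded_Another3rdParty.dll>+0x4ce58"
# capturing the unloaded module name and the hexadecimal offset.
UNLOADED_RE = re.compile(
    r"^[0-9a-fA-F]{8}\s+[0-9a-fA-F]{8}\s+<Unloaded_([^>]+)>\+0x([0-9a-fA-F]+)"
)


def unloaded_frames(stack_output):
    """Return (module, offset) pairs for frames inside unloaded modules."""
    frames = []
    for line in stack_output.splitlines():
        match = UNLOADED_RE.match(line.strip())
        if match:
            frames.append((match.group(1), int(match.group(2), 16)))
    return frames


def unload_in_progress(stack_output):
    """Report whether the stack passes through ntdll!LdrUnloadDll."""
    return "ntdll!LdrUnloadDll" in stack_output


SAMPLE = """\
ChildEBP RetAddr
WARNING: Frame IP not in any known module. Following frames may be wrong.
0012f910 7739b6e3 <Unloaded_Another3rdParty.dll>+0x4ce58
0012f93c 7739b874 user32!InternalCallWinProc+0x28
0012fac0 0a0f2e01 user32!SendMessageA+0x7f
0012fae4 0a0f2ac7 3rdPartyDLL+0x52e01
0012fb80 7c839dee ntdll!LdrpCallInitRoutine+0x14
0012fc94 77e6b1bb ntdll!LdrUnloadDll+0x41a
0012fca8 0050c9c1 kernel32!FreeLibrary+0x41
"""

if __name__ == "__main__":
    for module, offset in unloaded_frames(SAMPLE):
        print(f"call into unloaded module {module} at offset {offset:#x}")
    print("unload in progress:", unload_in_progress(SAMPLE))
```

In the trace above both checks are positive: while 3rdPartyDLL is being unloaded through FreeLibrary, it calls SendMessageA, and the message is dispatched to code in a module that has already been unloaded.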

- Dmitry Vostokov @ DumpAnalysis.org -