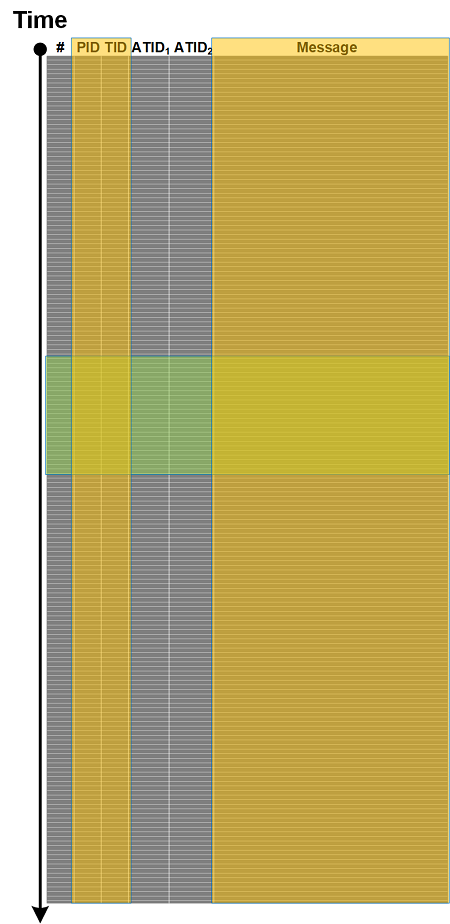

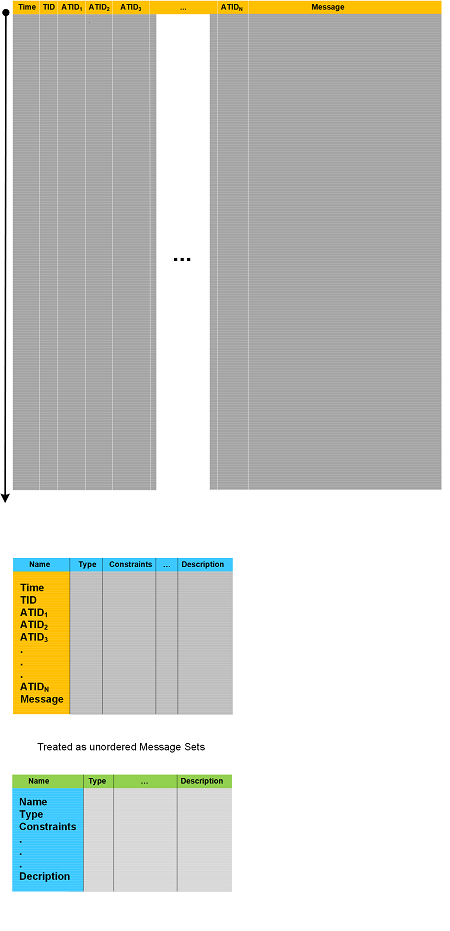

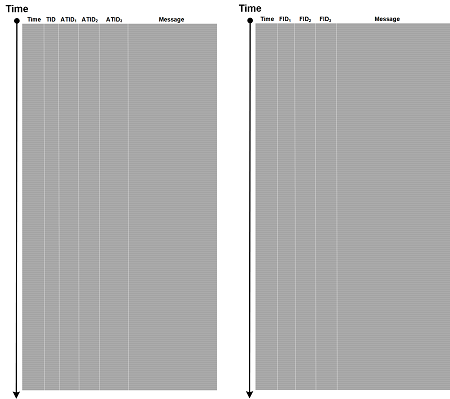

Manual analysis of Execution Residue in stack regions can be daunting, so basic statistics on the distribution of addresses, values, and symbolic information can be useful. To automate this process, we use the pandas Python library and interpret the preprocessed output of the WinDbg dps and dpp commands as a DataFrame object:

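    import pandas as pd
    import pandas_profiling

    # Load the preprocessed WinDbg output into a DataFrame
    df = pd.read_csv("stack.csv")

    # Generate the profile report and save it as HTML;
    # the with-block closes the file even if report generation fails
    with open("stack.html", "w") as html_file:
        html_file.write(pandas_profiling.ProfileReport(df).to_html())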

We get a rudimentary profile report, stack.html, for the stack.csv file. The same was done for the Address, Address/Value, Value, and Symbol output of the dpp command: stack4columns.html for the stack4columns.csv file.
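The preprocessing of raw dps output into CSV is not shown here. The following is only a minimal sketch of one way it could be done. It assumes each dps line has the shape "address value [symbol]", with the 64-bit halves separated by a backtick. The column names Address, Value, and Symbol and the sample lines are illustrative assumptions, not the actual layout of stack.csv.

```python
import csv
import io
import re

# Assumed dps line shape: address, pointer-sized value, optional symbol.
# 64-bit WinDbg output separates the address halves with a backtick;
# unreadable memory is shown as question marks.
DPS_LINE = re.compile(r"^\s*([0-9a-fA-F`]{8,})\s+([0-9a-fA-F`?]{8,})(?:\s+(.*\S))?\s*$")

def dps_to_rows(text):
    """Turn raw dps output into a list of Address/Value/Symbol dicts."""
    rows = []
    for line in text.splitlines():
        match = DPS_LINE.match(line)
        if not match:
            continue  # skip prompts, blank lines, and anything unrecognized
        address, value, symbol = match.groups()
        rows.append({
            "Address": address.replace("`", ""),
            "Value": value.replace("`", ""),
            "Symbol": symbol or "",
        })
    return rows

def rows_to_csv(rows):
    """Serialize the rows as CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["Address", "Value", "Symbol"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()

# Hypothetical sample, made up for illustration only.
SAMPLE = """0:000> dps 0000003e`2a1ff6a8 L2
0000003e`2a1ff6a8  00007ff8`1c2d1234 ntdll!RtlUserThreadStart+0x21
0000003e`2a1ff6b0  00000000`00000000
"""

rows = dps_to_rows(SAMPLE)
csv_text = rows_to_csv(rows)
```

The resulting text can be written to a file and loaded with pd.read_csv. Keeping the addresses and values as hexadecimal strings, rather than converting them to integers, is a design choice of this sketch: it preserves the unreadable-memory markers.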

We call this analysis pattern Region Profile since any Memory Region can be used. It is not limited to Python/pandas: other libraries, scripts, and scripting languages can also be used for statistical and correlational profiling. We plan to provide more examples here in the future.
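As an illustration of profiling without pandas, the sketch below computes a few region statistics with only the Python standard library. The row layout (Value and Symbol keys), the choice of statistics, and the sample data are assumptions made for this example. It is not a replacement for the full profile report.

```python
import re
from collections import Counter

def module_of(symbol):
    """Return the module part of 'module!function+offset' or 'module+offset'."""
    return re.split(r"[!+]", symbol, maxsplit=1)[0]

def region_profile(rows):
    """Compute simple distribution statistics for a memory region."""
    values = [row["Value"] for row in rows]
    symbols = [row["Symbol"] for row in rows if row["Symbol"]]
    return {
        "total": len(rows),
        "with_symbol": len(symbols),
        # A value is zero when it consists only of '0' digits.
        "zero_values": sum(1 for value in values if value.strip("0") == ""),
        "distinct_values": len(set(values)),
        "modules": Counter(module_of(symbol) for symbol in symbols),
    }

# Hypothetical rows, made up for illustration only.
sample_rows = [
    {"Value": "00007ff81c2d1234", "Symbol": "ntdll!RtlUserThreadStart+0x21"},
    {"Value": "00007ff81b0a7034", "Symbol": "kernel32!BaseThreadInitThunk+0x14"},
    {"Value": "0000000000000000", "Symbol": ""},
    {"Value": "00007ff81c2d1234", "Symbol": "ntdll!RtlUserThreadStart+0x21"},
]

profile = region_profile(sample_rows)
for module, count in profile["modules"].most_common():
    print(f"{module}: {count}")
```

A high count for one module, or many repeated values, can point to where a closer manual look at the region is worthwhile.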

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -