Crash Dump Analysis Patterns (Part 227)

Sunday, May 17th, 2015

Managed code Nested Exceptions give us stack traces bound to the process virtual space. However, exception objects may be marshaled across processes and even computers, and the return addresses of a remote stack trace are not valid in a different process context. Fortunately, exception objects have a _remoteStackTraceString field that contains the original stack trace. The default analysis command sometimes uses it:

0:013> !analyze -v

[...]

EXCEPTION_OBJECT: !pe 25203b0

Exception object: 00000000025203b0

Exception type: System.Reflection.TargetInvocationException

Message: Exception has been thrown by the target of an invocation.

InnerException: System.Management.Instrumentation.WmiProviderInstallationException, Use !PrintException 0000000002522cf0 to see more.

StackTrace (generated):

SP IP Function

000000001D39E720 0000000000000001 Component!Proxy.Start()+0x20

000000001D39E720 000007FEF503D0B6 mscorlib_ni!System.Threading.ExecutionContext.RunInternal(System.Threading.ExecutionContext, System.Threading.ContextCallback, System.Object, Boolean)+0x286

000000001D39E880 000007FEF503CE1A mscorlib_ni!System.Threading.ExecutionContext.Run(System.Threading.ExecutionContext, System.Threading.ContextCallback, System.Object, Boolean)+0xa

000000001D39E8B0 000007FEF503CDD8 mscorlib_ni!System.Threading.ExecutionContext.Run(System.Threading.ExecutionContext, System.Threading.ContextCallback, System.Object)+0x58

000000001D39E900 000007FEF4FB0302 mscorlib_ni!System.Threading.ThreadHelper.ThreadStart()+0x52

[...]

MANAGED_STACK_COMMAND: ** Check field _remoteStackTraceString **;!do 2522cf0;!do 2521900

[...]

0:013> !DumpObj 2522cf0

[...]

000007fef51b77f0 4000054 2c System.String 0 instance 2521900 _remoteStackTraceString

[…]

0:013> !DumpObj 2521900

Name: System.String

[…]

String: at System.Management.Instrumentation.InstrumentationManager.RegisterType(Type managementType)

at Component.Provider..ctor()

at Component.Start()
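The manual steps above (find the _remoteStackTraceString field in the !DumpObj output, then dump the string it points to) can be sketched as a small Python helper that works on saved debugger output. This is only an illustration: the function names are hypothetical, and it assumes the field line layout shown above, where the last two whitespace-separated columns are the value and the field name.

```python
def find_field_value(dumpobj_output, field_name="_remoteStackTraceString"):
    """Return the Value column for field_name from !DumpObj text, or None.

    Assumes each field line ends with "<value> <name>", as in the listing above.
    """
    for line in dumpobj_output.splitlines():
        parts = line.split()
        if len(parts) >= 2 and parts[-1] == field_name:
            return parts[-2]
    return None


def remote_frames(string_value):
    """Split a remote stack trace string into frames (lines starting with 'at ')."""
    frames = []
    for line in string_value.splitlines():
        line = line.strip()
        if line.startswith("at "):
            frames.append(line[3:])
    return frames


# Sample text copied from the debugger output above.
field_line = ("000007fef51b77f0 4000054 2c System.String 0 instance "
              "2521900 _remoteStackTraceString")
trace_text = """at System.Management.Instrumentation.InstrumentationManager.RegisterType(Type managementType)

at Component.Provider..ctor()

at Component.Start()"""

address = find_field_value(field_line)
if address is not None and int(address, 16) != 0:
    # A zero value would mean the field is null and there is nothing to dump.
    print("!DumpObj " + address)

for frame in remote_frames(trace_text):
    print(frame)
```

A null (all zeros) value means the exception was not rethrown from a remote context, in which case only the locally generated stack trace is available.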

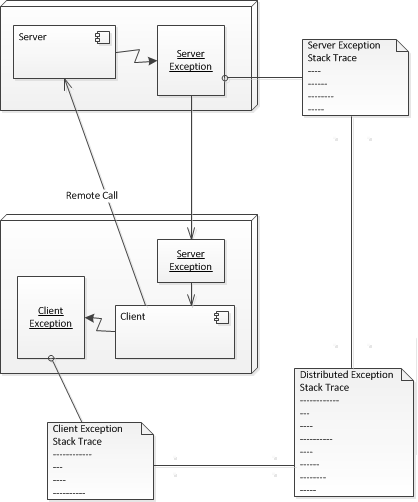

Checking this field may also be necessary for exceptions of interest from managed space Execution Residue. We call this pattern Distributed Exception. The basic idea is illustrated in the following diagram using the borrowed UML notation (not limited to just two computers):
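The same idea can be shown outside .NET with a rough Python analogy. It is not how the CLR marshals exceptions: the function names and the remote_stack_trace attribute are hypothetical, and pickle stands in for whatever transport carries the exception between processes. The point is only that the live traceback does not survive serialization, so the original stack trace has to travel as a string.

```python
import pickle
import traceback


def remote_call():
    # Stands in for the code that fails in the remote process.
    raise ValueError("provider registration failed")


def run_remotely():
    """Simulate the remote side: capture the trace as text, then serialize."""
    try:
        remote_call()
    except ValueError as exc:
        # Hypothetical attribute playing the role of _remoteStackTraceString.
        exc.remote_stack_trace = traceback.format_exc()
        return pickle.dumps(exc)


def receive(payload):
    """Simulate the local side: the exception arrives without a live traceback."""
    return pickle.loads(payload)


exc = receive(run_remotely())
print(exc.__traceback__)       # the traceback object is not carried across
print(exc.remote_stack_trace)  # the original trace survives only as text
```

On the receiving side the only record of where the failure happened is the string, which is why the string field is the one to check in a dump.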

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -