Archive for the ‘Software Trace Analysis’ Category

Sunday, October 14th, 2018

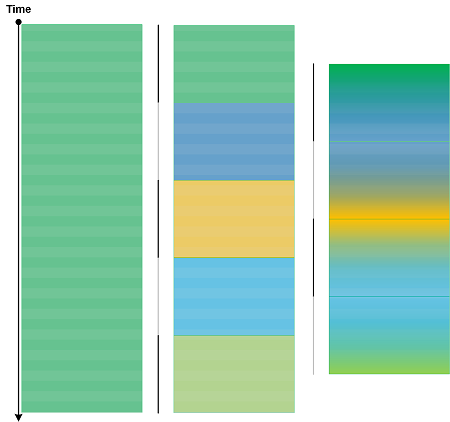

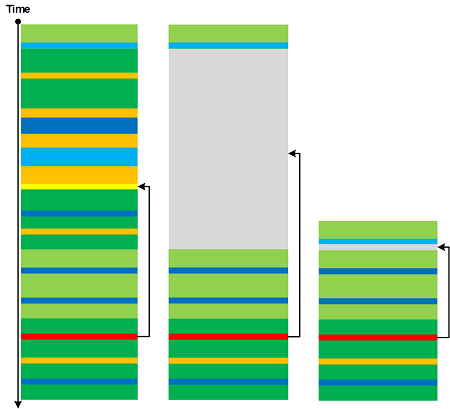

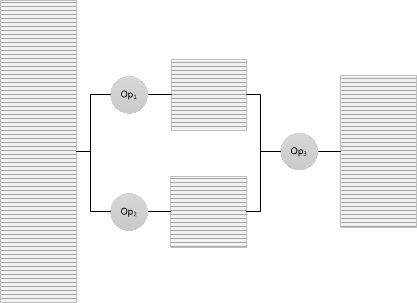

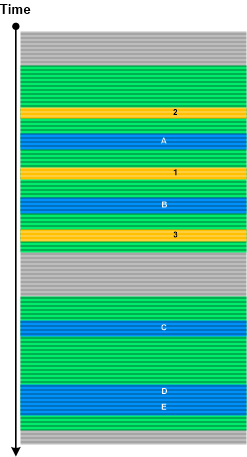

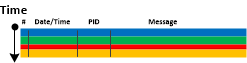

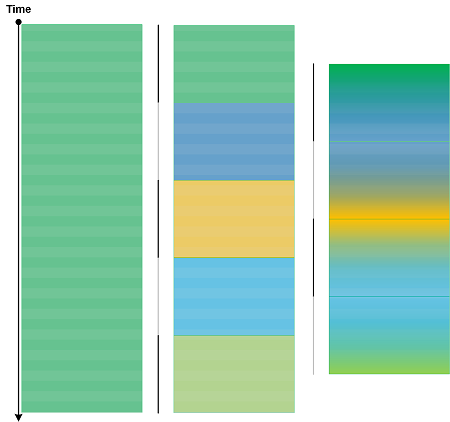

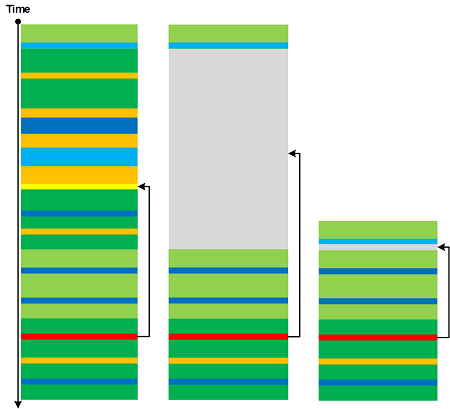

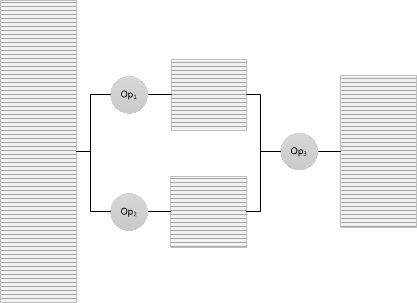

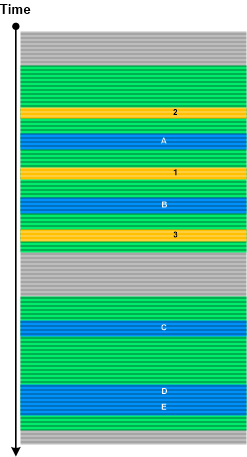

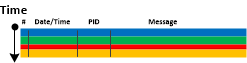

When we have very large traces (including Split Traces), we can use sharding to split a log into several shards for parallel processing. However, some patterns may require analysis across shard boundaries. Trace Sharding is illustrated in the following diagram:
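In code, Trace Sharding might be sketched as follows. This is a minimal illustration, not a reference implementation: the names `shard_trace` and `count_errors`, the `overlap` parameter, and the sample messages are all assumptions made for the example. The optional overlap is one simple way to keep boundary-crossing patterns visible inside a single shard.

```python
from concurrent.futures import ThreadPoolExecutor


def shard_trace(messages, shard_size, overlap=0):
    """Split a list of messages into shards of at most shard_size messages.

    Each shard after the first also carries `overlap` messages from the end
    of the previous shard, so patterns that straddle a boundary stay visible.
    """
    if shard_size <= 0 or overlap < 0:
        raise ValueError("shard_size must be positive and overlap non-negative")
    shards = []
    for start in range(0, len(messages), shard_size):
        shards.append(messages[max(0, start - overlap):start + shard_size])
    return shards


def count_errors(shard):
    # Hypothetical per-shard analysis: count messages that mention "error".
    return sum("error" in message.lower() for message in shard)


# Hypothetical trace with one error message.
trace = [f"message {n}" for n in range(10)]
trace[4] = "Error: open failed"

# No overlap here, so per-shard counts can simply be added up.
shards = shard_trace(trace, shard_size=4)
with ThreadPoolExecutor() as pool:
    per_shard = list(pool.map(count_errors, shards))
total_errors = sum(per_shard)
```

When `overlap` is greater than zero, the overlapping messages are seen by two shards, so additive results such as counts would need deduplication.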

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Saturday, October 13th, 2018

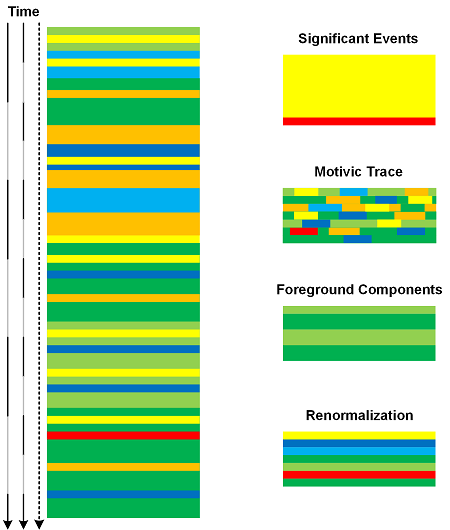

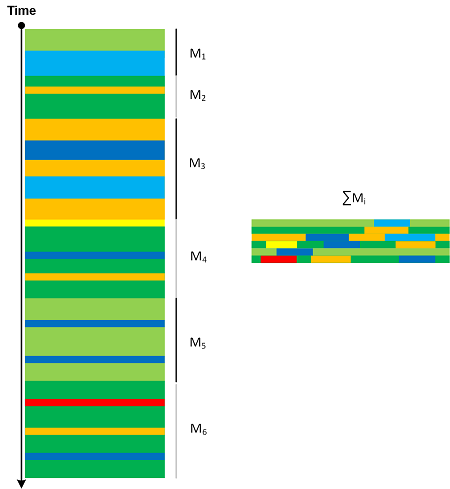

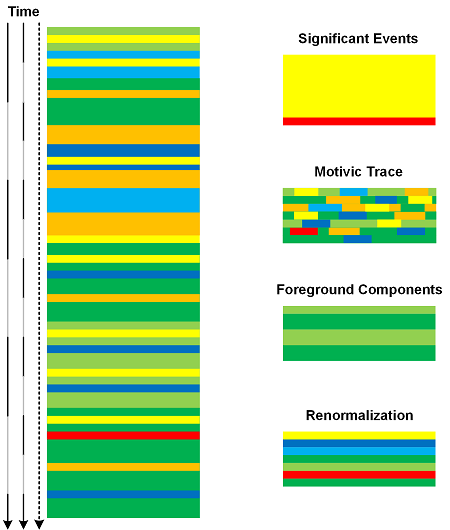

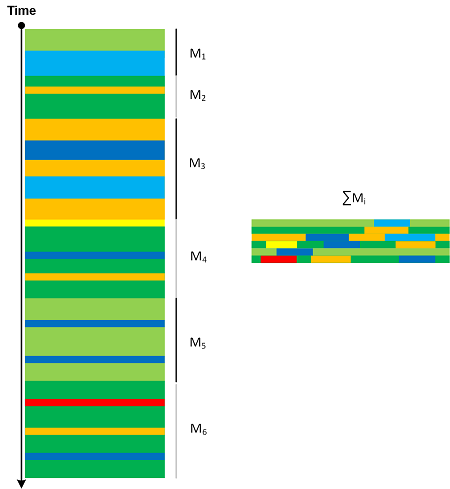

A software trace or log can be analyzed using different Time Scales. The coarser the scale, the more messages are included in each time interval. Such per-interval Message Sets can be analyzed and transformed into one message using analysis patterns such as Significant Event, Motivic Trace, Background and Foreground Components, and Renormalization. The resulting new trace will be a scaled version of the original trace, as depicted in the following diagram:
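A rough sketch of such rescaling, assuming messages are `(timestamp, text)` pairs with numeric timestamps. The function names and the reduction rule (keep the first error, otherwise count the messages) are hypothetical stand-ins for a real analysis pattern such as Significant Event.

```python
from collections import defaultdict


def rescale_trace(messages, interval, summarize):
    """Group (timestamp, text) messages into intervals and reduce each set."""
    buckets = defaultdict(list)
    for timestamp, text in messages:
        buckets[int(timestamp // interval)].append(text)
    return [(index * interval, summarize(texts))
            for index, texts in sorted(buckets.items())]


def first_error_or_count(texts):
    # Hypothetical reduction of one per-interval Message Set to one message.
    for text in texts:
        if "error" in text.lower():
            return text
    return f"{len(texts)} message(s)"


trace = [(0.2, "start"), (0.7, "read"), (1.1, "Error: timeout"),
         (1.4, "retry"), (3.0, "stop")]
scaled = rescale_trace(trace, interval=1.0, summarize=first_error_or_count)
```

Intervals without messages are simply absent from the scaled trace in this sketch.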

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Sunday, October 7th, 2018

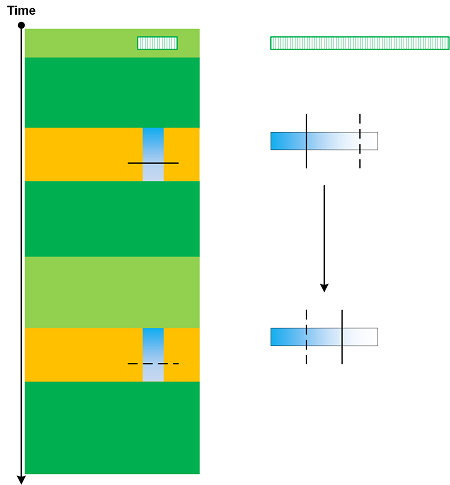

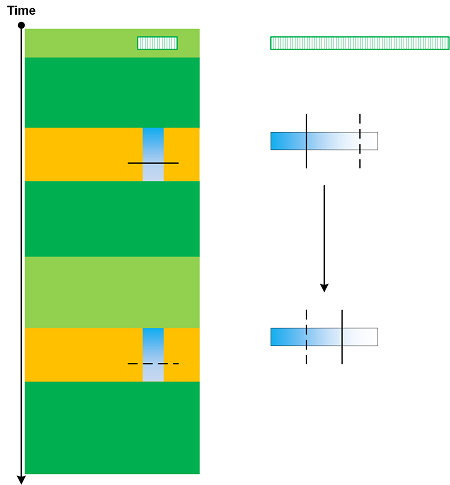

The data in individual messages and in State Dump message blocks may be truncated. This is similar to Visibility Limit at the log message level. When data values are sorted and resorted, this may result in “hidden” data replacing the previously “visible” data and vice versa, as shown in the following diagram:

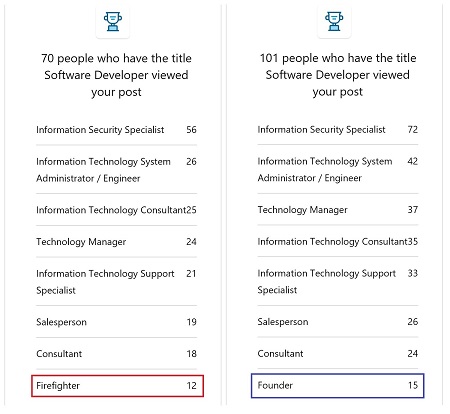

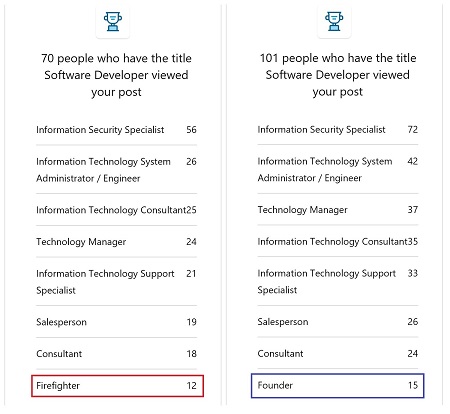
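The effect can be reproduced with a small sketch. The rows, column names, and the `visible_rows` helper are invented for illustration; the only assumption about the logging side is that a sorted listing is cut off after a fixed number of rows.

```python
def visible_rows(stats, sort_key, limit, descending=True):
    """Return the first `limit` rows after sorting; the rest are truncated."""
    ordered = sorted(stats, key=lambda row: row[sort_key], reverse=descending)
    return ordered[:limit]


# Hypothetical stats rows.
stats = [
    {"key": "A", "views": 120, "likes": 3},
    {"key": "B", "views": 90, "likes": 9},
    {"key": "C", "views": 40, "likes": 7},
]

by_views = [row["key"] for row in visible_rows(stats, "views", limit=2)]
by_likes = [row["key"] for row in visible_rows(stats, "likes", limit=2)]
```

Sorting by `views` shows keys A and B, while sorting by `likes` shows B and C: key C was hidden in the first view and A is hidden in the second, although all three keys are valid.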

This pattern (Truncated Data) was conceived after we observed a change of data key in a sequence of stats, sorted by value, for a LinkedIn post (not related to firefighting) and thought that was “strange”:

However, stats from another post showed that both keys were valid:

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Saturday, September 29th, 2018

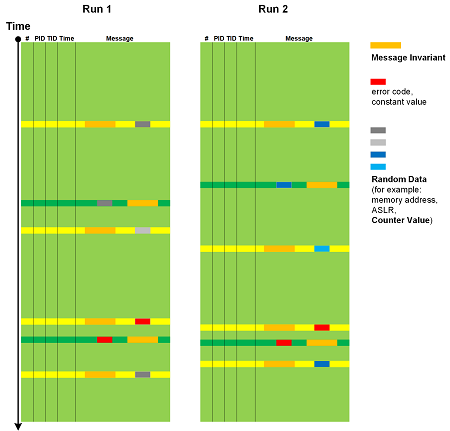

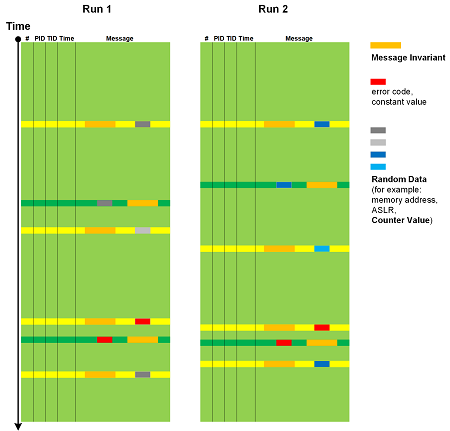

Trace and log message text usually consists of constant, unchanging Message Invariants and some varying data. The latter can be classified into Random Data, such as memory addresses (especially when ASLR is enabled); Counter Values; and data that is variable in form but constant in nature, such as error values and NULL pointers. Individual values from Signals are not considered random, but their sequence can be. This analysis pattern is depicted in the following diagram (adapted from the Data Association analysis pattern):
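A crude heuristic sketch of this classification follows. The token pattern, the three class labels, and the sample messages are assumptions; in particular, "random" here only means "neither constant nor strictly increasing", which is far weaker than a statistical test.

```python
import re
from collections import defaultdict

# Variable tokens: hexadecimal addresses, decimal numbers, or NULL.
TOKEN = re.compile(r"0x[0-9a-fA-F]+|\d+|NULL")


def split_message(text):
    """Return (Message Invariant with placeholders, list of variable tokens)."""
    return TOKEN.sub("{}", text), TOKEN.findall(text)


def classify_column(values):
    """Classify one variable position from its values across the trace."""
    if len(set(values)) == 1:
        return "constant"
    if all(value.isdigit() for value in values):
        numbers = [int(value) for value in values]
        if all(b > a for a, b in zip(numbers, numbers[1:])):
            return "counter"
    return "random"


# Hypothetical messages sharing one Message Invariant.
trace = [
    "alloc block 0x7ff61a2b0010 seq 1 status 0",
    "alloc block 0x7ff5c0de4420 seq 2 status 0",
    "alloc block 0x7ff6009a8800 seq 3 status 0",
]

rows_by_invariant = defaultdict(list)
for line in trace:
    invariant, values = split_message(line)
    rows_by_invariant[invariant].append(values)

classes = {invariant: [classify_column(column) for column in zip(*rows)]
           for invariant, rows in rows_by_invariant.items()}
```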

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Saturday, September 22nd, 2018

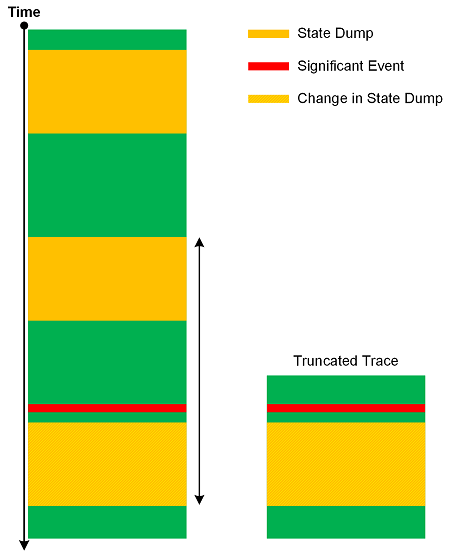

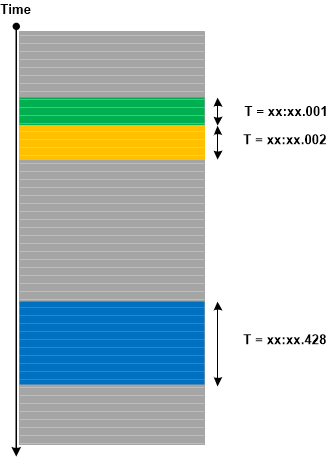

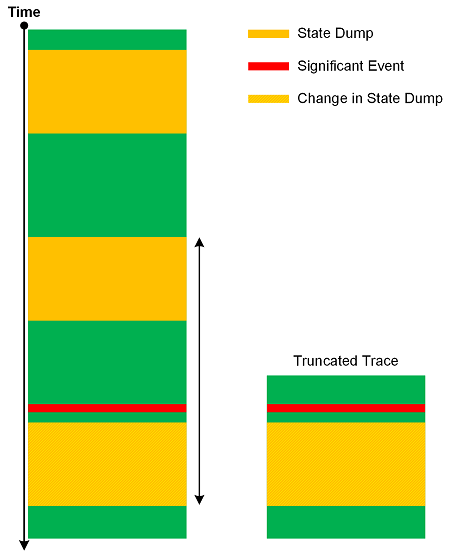

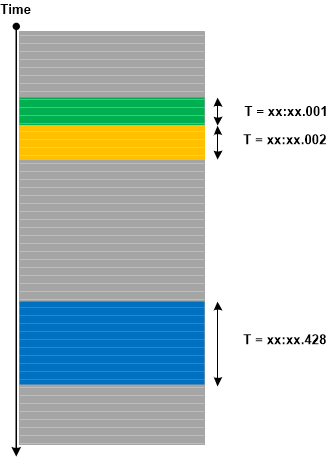

Sometimes we ask for a log file to see the State and Event pattern, and see it there, only to find that we cannot do a Back Trace of State Dumps from some Significant Event for Inter-Correlation analysis because our Data Interval is truncated (Truncated Trace). This highlights the importance of proper tracing intervals, which we call the Significant Interval analysis pattern by analogy with significant digits in scientific measurements. The following diagram illustrates the pattern:

If you find that you often get truncated traces and logs, you may want to increase Statement Current for state logging.

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Mathematics of Debugging, Software Trace Analysis, Software Trace Analysis Tips and Tricks, Software Tracing Design, Software Tracing Implementation Patterns, Trace Analysis Patterns | No Comments »

Saturday, September 8th, 2018

We can “integrate” a trace message stream into another, smaller trace. By analogy with motivic integration in contemporary mathematics, we call this analysis pattern Motivic Trace. There can be border cases where the whole trace is reduced to one message or every message is associated with a different message (perhaps a shorter one or a number). Message Sets that are integrated into Motivic Trace can be completely different (for example, based on Motives), in contrast to Quotient Trace, where we reduce Message Sets that share a common attribute.

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Mathematics of Debugging, Software Trace Analysis, Trace Analysis Patterns | 1 Comment »

Thursday, June 28th, 2018

Using the metaphor of renormalization from physics, we introduce the Renormalization trace and log analysis pattern, where a selected message and its Message Context are replaced by a single message:
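One possible reading in code, assuming the Message Context is modeled as a fixed number of neighbouring messages on each side of the selected one. That window model, the `renormalize` name, and the replacement text are assumptions of this sketch.

```python
def renormalize(messages, index, radius, combine):
    """Replace messages[index] and `radius` neighbours on each side by one message."""
    if not 0 <= index < len(messages):
        raise IndexError("index outside the trace")
    start = max(0, index - radius)
    end = min(len(messages), index + radius + 1)
    return messages[:start] + [combine(messages[start:end])] + messages[end:]


trace = ["open file", "read header", "Error: bad checksum", "close file", "exit"]
renormalized = renormalize(
    trace, index=2, radius=1,
    combine=lambda context: "checksum failure while reading file",
)
```

Here the three messages around the error collapse into one, and the rest of the trace is left untouched.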

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns, Trace Analysis and Physics | 1 Comment »

Sunday, May 13th, 2018

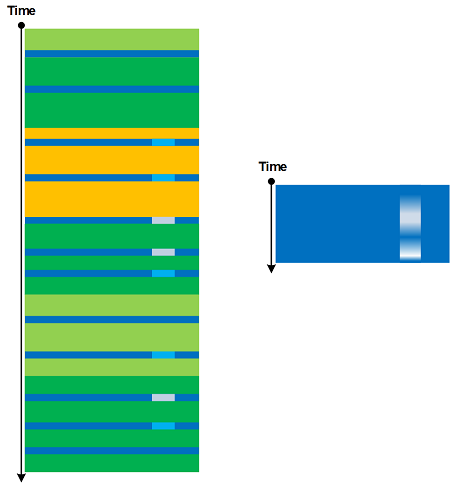

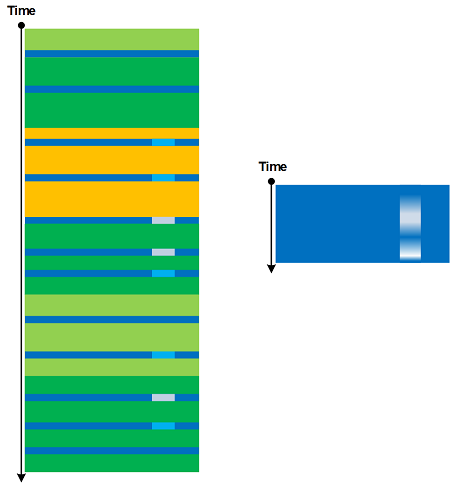

According to the definition in “Topological Signal Processing” by Michael Robinson (ISBN: 978-3662522844), “a signal consists of a collection of related measurements” (p. 5). For traces and logs we can apply a similar definition and consider a Signal as a collection of local messages having the same Message Invariant and related variable data values. Signals are examples of Message Sets. A typical example is a set of related Counter Value messages. Signals can be obtained by taking the Adjoint Thread of Activity of a specific message (to filter out Background Components “noise”), as illustrated in the following diagram:

Generally, the variable “measurement” part can form Braid of Activity.

We introduce Signal analysis pattern to bridge the gap between Software Narratology and Hardware Narratology.
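As a small sketch of extracting a Signal, assume each message is a plain string and the Message Invariant can be expressed as a regular expression with one capturing group for the numeric "measurement". The function name and the sample messages are hypothetical.

```python
import re


def extract_signal(messages, invariant_pattern):
    """Collect (position, value) pairs for messages matching one invariant."""
    pattern = re.compile(invariant_pattern)
    signal = []
    for position, text in enumerate(messages):
        match = pattern.fullmatch(text)
        if match:
            signal.append((position, int(match.group(1))))
    return signal


trace = [
    "queue length: 3",
    "heartbeat",
    "queue length: 5",
    "cache miss for key 17",
    "queue length: 4",
]
signal = extract_signal(trace, r"queue length: (\d+)")
```

The non-matching messages play the role of background "noise" and are filtered out, leaving the sequence of measurements with their positions in the trace.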

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Narratology, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Thursday, January 18th, 2018

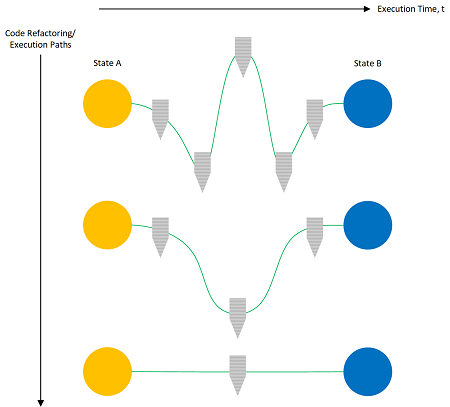

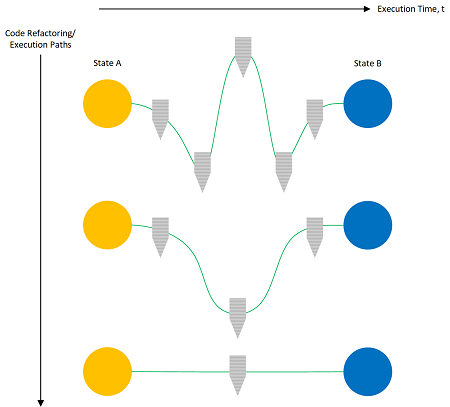

We have analysis patterns that compare changes in software traces and logs during different executions (Master Trace) and during the evolution of software itself (Meta Trace). Such patterns are general enough, and often we are interested in their restriction to different execution paths or changes in code that leave start and end software states invariant:

We call this analysis pattern Trace Homotopy by analogy with homotopy in mathematics, where a curve or sequence of operations can vary while its endpoints stay constant.

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Sunday, November 26th, 2017

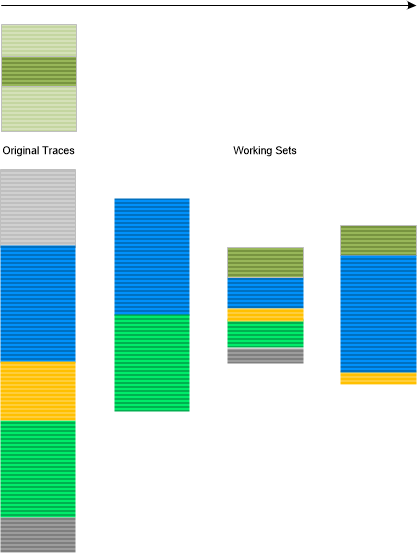

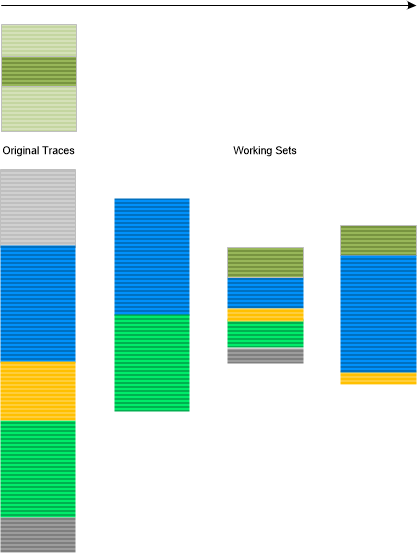

When we analyze traces and logs we work with only a small subset of log messages. We call any such current subset Working Set by analogy with working sets in operating system memory paging implementations:

This analysis pattern can also be reconciled with an operadic approach to trace and log analysis by chaining appropriate diagnostic operads from the original traces to the desired working sets:

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Wednesday, October 4th, 2017

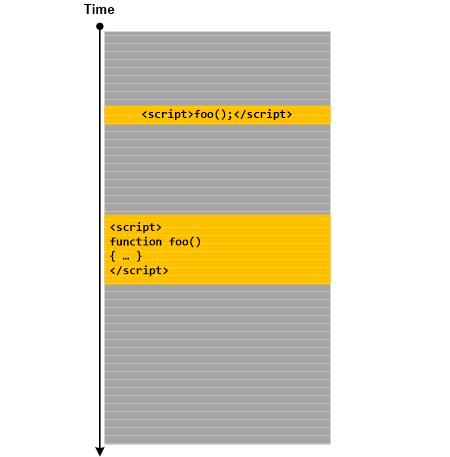

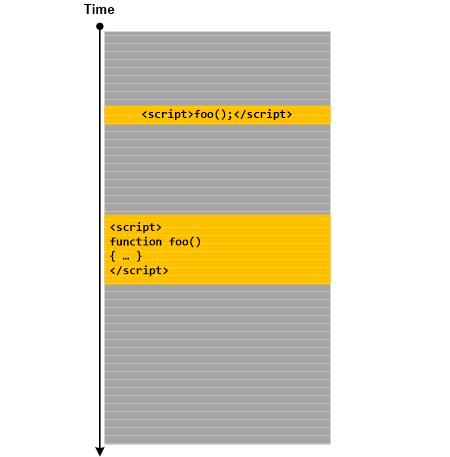

Messages that contain scripting statements can be signs of malnarratives that resulted from log injection during attempts to exploit possible cross-channel scripting (XCS) and cross-site scripting (XSS) vulnerabilities. Such Script Messages may be spread across a log, as illustrated in the following diagram:
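A minimal sketch of flagging candidate Script Messages follows. The regular expression covers only a handful of illustrative signs and is an assumption of this example, not a complete injection detector; the log lines are invented.

```python
import re

# A few illustrative signs of scripting statements (far from exhaustive).
SCRIPT_SIGNS = re.compile(
    r"<\s*script\b|javascript\s*:|\bon(?:error|load|click)\s*=",
    re.IGNORECASE,
)


def find_script_messages(messages):
    """Return (position, text) for messages that contain a scripting sign."""
    return [(position, text) for position, text in enumerate(messages)
            if SCRIPT_SIGNS.search(text)]


log = [
    "GET /index.html 200",
    "login failed for user <script>alert(1)</script>",
    "GET /img/logo.png 200",
    "comment saved: <img src=x onerror=alert(1)>",
]
hits = [position for position, _ in find_script_messages(log)]
```

The positions of the hits show how such messages are spread across the log.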

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Malnarratives, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Sunday, August 6th, 2017

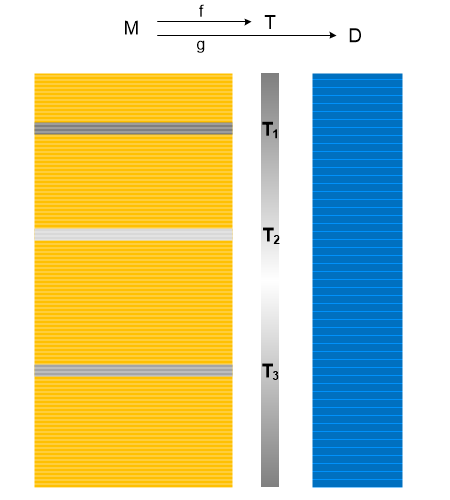

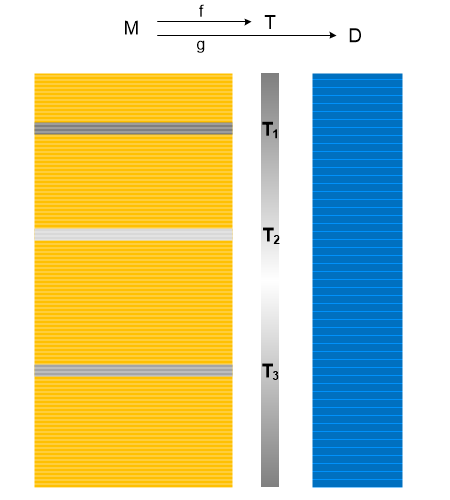

We can define a function from a domain of trace messages (M) to some other range, either continuous (T) or discrete (D). We call this analysis pattern Trace Field by analogy with fields in physics:

Or, in general, a Trace Field is a functor from the category of trace messages (M) to some other category, not necessarily numerical. Typical examples include Trace Presheaves and Fiber Bundles. Another example, for generalized logs, is Memory Fibration taken to the extreme.

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Wednesday, August 2nd, 2017

Sometimes we have variable sequences of Significant Events when we expect a certain constant number of such events in repeated normal and problem cases. For example, in repeated normal cases we expect more than 10 events but in repeated abnormal cases just 2 events. The latter cases would indicate that something happened inside the processing of the second event. But if one or more such cases contain 3 or 4 events, that would point to some external influence that aborted the sequence of events. We call such variable event sequences Hedges by analogy with hedge variables or variadic variables.

One recent example that we encountered involved multiple abnormal cases with just 2 events. We were about to investigate the internals of the second event but noticed that one of the cases contained 3 events. Further analysis indicated that the whole sequence was aborted by some external process after reaching a certain timeout. In the case of 3 events, the first 2 happened earlier, and that allowed the 3rd event to happen before the timeout was triggered:
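Spotting such cases can be sketched as a comparison of per-case event counts against the most common count. The case names and counts below are invented, and treating the most common count as "typical" is an assumption of this sketch.

```python
from collections import Counter


def find_hedge_cases(event_counts):
    """Return the most common event count and the cases that deviate from it."""
    typical, _ = Counter(event_counts.values()).most_common(1)[0]
    deviating = {case: count for case, count in event_counts.items()
                 if count != typical}
    return typical, deviating


# Hypothetical Significant Event counts for repeated abnormal cases.
abnormal_cases = {"case-1": 2, "case-2": 2, "case-3": 3, "case-4": 2}
typical, deviating = find_hedge_cases(abnormal_cases)
```

A non-empty `deviating` result suggests looking for an external influence, such as a timeout, before digging into the internals of the last common event.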

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Thursday, July 20th, 2017

Certain types of blind SQL injection attacks may leave log messages with just a one-byte difference. We call this analysis pattern Ultrasimilar Messages by analogy with an ultrametric space in mathematics and the interpretation of messages as p-adic numbers. Since such messages may be scattered in a log, we can choose a Message Pattern based on some Message Invariant (for example, parts of an SQL request) and then analyze its Fiber of Activity (for example, the Data Flow of its variable part). A log with two different types of Ultrasimilar Messages is shown in the following diagram:
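A brute-force sketch of finding such messages compares every pair and keeps those of equal length that differ in exactly one byte. The function names and the sample log lines are illustrative assumptions; the pairwise comparison is quadratic, so a real log would first be narrowed down by Message Pattern.

```python
from itertools import combinations


def differs_by_one_byte(first, second):
    """True if both messages have equal byte length and differ in one byte."""
    a, b = first.encode(), second.encode()
    return len(a) == len(b) and sum(x != y for x, y in zip(a, b)) == 1


def ultrasimilar_pairs(messages):
    """Return index pairs of messages that differ by exactly one byte."""
    return [(i, j)
            for (i, a), (j, b) in combinations(enumerate(messages), 2)
            if differs_by_one_byte(a, b)]


# Hypothetical log lines.
log = [
    "SELECT name FROM users WHERE id=1 AND SUBSTR(pw,1,1)='a'",
    "GET /health 200",
    "SELECT name FROM users WHERE id=1 AND SUBSTR(pw,1,1)='b'",
    "SELECT name FROM users WHERE id=1 AND SUBSTR(pw,1,1)='c'",
]
pairs = ultrasimilar_pairs(log)
```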

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Mathematics of Debugging, Security, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Monday, May 22nd, 2017

Sometimes we are interested in a Message Set whose messages have the same time attribute value (possibly rounded to some digit). We call this analysis pattern Activity Packet by analogy with wave packets. It may allow identification of related threads and activities.

It is different from the Activity Quantum analysis pattern, where the time attribute value may change within a continuous Message Set that has the same thread ID.
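Grouping by a rounded time attribute might look like the sketch below. It assumes messages are `(timestamp_ms, thread_id, text)` tuples with integer millisecond timestamps; the tuple layout, the `resolution_ms` parameter, and the sample data are hypothetical.

```python
from collections import defaultdict


def activity_packets(messages, resolution_ms):
    """Group messages whose timestamps fall into the same time slot."""
    packets = defaultdict(list)
    for timestamp_ms, thread_id, text in messages:
        packets[timestamp_ms // resolution_ms].append((thread_id, text))
    return dict(packets)


trace = [
    (1000, 1, "request sent"),
    (1004, 7, "request received"),
    (1350, 7, "processing"),
    (2001, 1, "reply received"),
    (2003, 7, "reply sent"),
]
packets = activity_packets(trace, resolution_ms=10)

# Packets that involve more than one thread hint at related activities.
related = {slot: sorted({thread_id for thread_id, _ in group})
           for slot, group in packets.items()
           if len({thread_id for thread_id, _ in group}) > 1}
```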

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Sunday, May 21st, 2017

Some Stack Traces reveal a functional purpose, for example, painting, querying a database, doing HTML or JavaScript processing, loading a file, printing, or something else. Such traces from various Stack Trace Collections (unmanaged, managed, predicate, CPUs) may be compared for similarity and help with analysis patterns, such as examples in Wait Chain (C++11 condition variable, SRW lock), finding semantically Coupled Processes, and many others where we look at the meaning of stack trace frame sequences to relate them to each other. We call this analysis pattern Stack Trace Motif by analogy with Motif trace and log analysis pattern. Longer stack traces may contain several Motives and also Technology-Specific Subtraces (for example, COM interface invocation frames).

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Core Dump Analysis, Crash Dump Analysis, Crash Dump Patterns, Software Trace Analysis, Software Trace Analysis Tips and Tricks, Trace Analysis Patterns | No Comments »

Thursday, May 18th, 2017

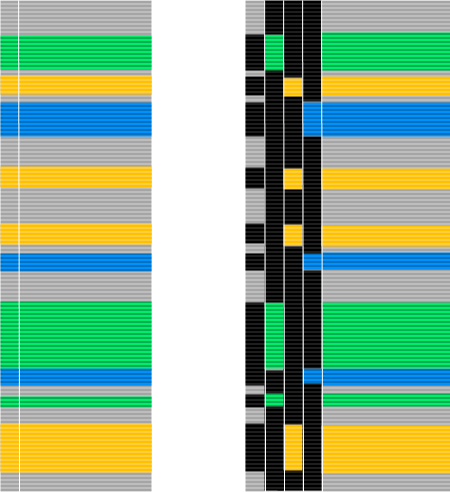

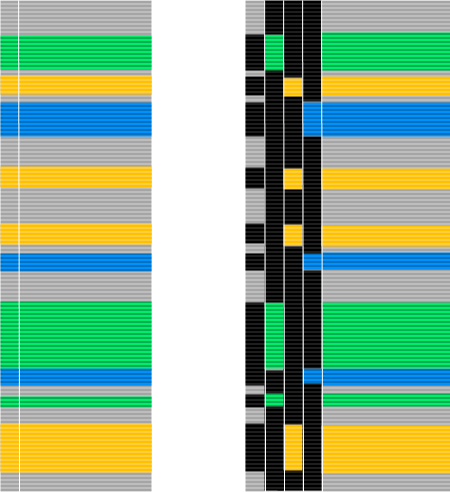

A typical software trace or log (for example, from Process Monitor) lists messages from several processes and threads sequentially. However, such a trace may be split into individual process ID or thread ID columns. The same can be done for any Adjoint Thread, as illustrated in the following diagram:

We call this analysis pattern Combed Trace by analogy with multibraiding.
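A minimal sketch of combing, assuming messages are `(thread_id, text)` pairs. The `comb_trace` name and the sample trace are assumptions; any other attribute could serve as the column key in the same way.

```python
def comb_trace(messages, empty=""):
    """Turn (thread_id, text) messages into rows with one column per thread ID."""
    thread_ids = sorted({thread_id for thread_id, _ in messages})
    column_of = {thread_id: index for index, thread_id in enumerate(thread_ids)}
    rows = []
    for thread_id, text in messages:
        row = [empty] * len(thread_ids)
        row[column_of[thread_id]] = text
        rows.append(row)
    return thread_ids, rows


trace = [(10, "open"), (20, "connect"), (10, "read"), (20, "send")]
header, rows = comb_trace(trace)
```

The message order is preserved row by row, so each column reads as one thread of activity while the rows keep the original sequence.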

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Software Trace Visualization, Trace Analysis Patterns | No Comments »

Wednesday, May 3rd, 2017

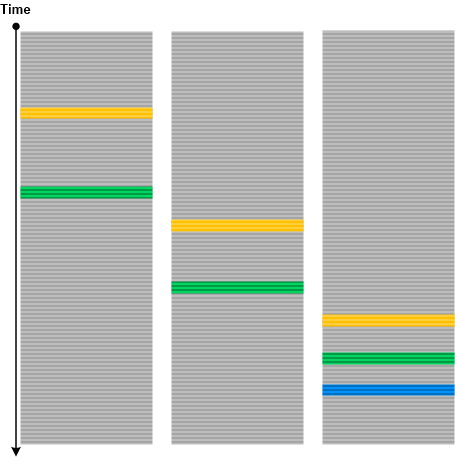

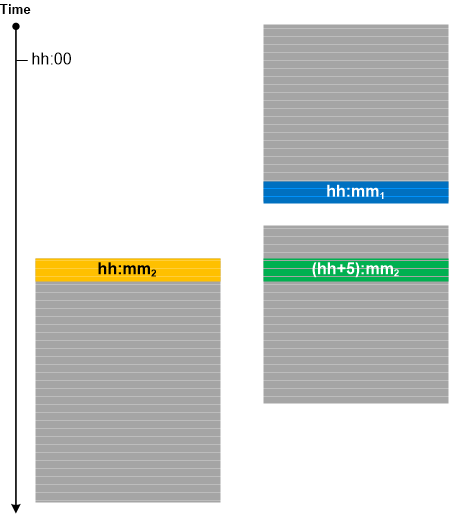

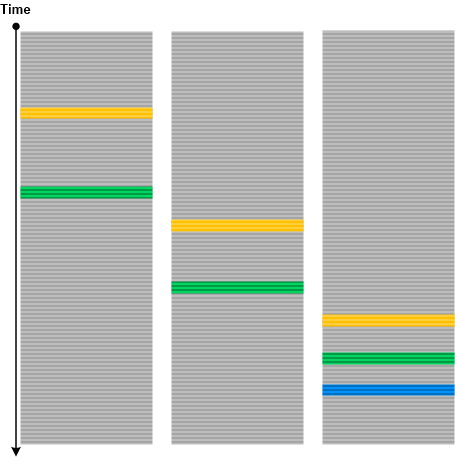

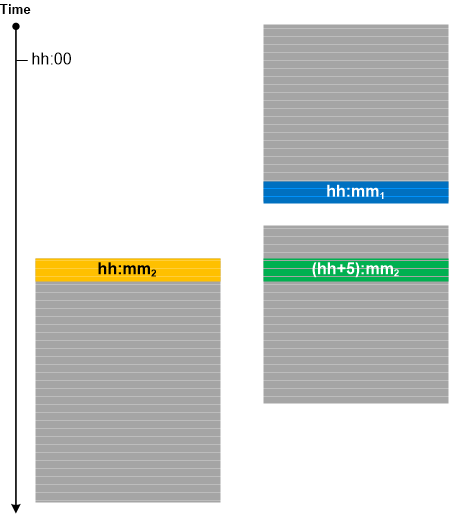

Often, for Inter-Correlational trace and log analysis, we need to make sure that we have synchronized traces. One version of the Unsynchronized Traces analysis pattern is depicted in the following diagram, where one trace ends (possibly a Truncated Trace) before the start of another trace and both were traced within one hour:

If tracing was done in different time zones with different local times specified in logs, we can determine whether the traces are synchronized (when time zone information is not available in Basic Facts) by looking at minutes, as shown for the third trace in the diagram above. This technique can also be used in trace calibration (see Calibrating Trace).

There is a similar analysis pattern for memory analysis called Unsynchronized Dumps.
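The minutes comparison can be sketched as an overlap test on a one-hour "clock face". The sketch assumes that each trace is shorter than one hour and that the clocks differ by a whole number of hours; the function names and timestamps are invented. An overlap in minutes is only a necessary sign of synchronization, since the traces may still come from different hours.

```python
from datetime import datetime

HOUR = 3600  # seconds


def seconds_into_hour(moment):
    return moment.minute * 60 + moment.second


def may_overlap_ignoring_hours(start_a, end_a, start_b, end_b):
    """Compare two traces by minutes and seconds only (hours are ignored)."""
    length_a = (end_a - start_a).total_seconds()
    length_b = (end_b - start_b).total_seconds()
    if not (0 <= length_a < HOUR and 0 <= length_b < HOUR):
        raise ValueError("each trace must be shorter than one hour")
    a0 = seconds_into_hour(start_a)
    b0 = seconds_into_hour(start_b)
    # On a circle of 3600 seconds, two arcs overlap if either start
    # falls inside the other arc.
    return (b0 - a0) % HOUR <= length_a or (a0 - b0) % HOUR <= length_b


# Hypothetical local times from logs recorded in different time zones.
first = (datetime(2017, 5, 3, 10, 5), datetime(2017, 5, 3, 10, 20))
second = (datetime(2017, 5, 3, 15, 10), datetime(2017, 5, 3, 15, 30))
third = (datetime(2017, 5, 3, 10, 40), datetime(2017, 5, 3, 10, 50))

first_second = may_overlap_ignoring_hours(*first, *second)
first_third = may_overlap_ignoring_hours(*first, *third)
```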

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in CDF Analysis Tips and Tricks, Core Dump Analysis, Crash Dump Analysis, Crash Dump Patterns, Log Analysis, Software Trace Analysis, Software Trace Analysis Tips and Tricks, Software Trace Reading, Trace Analysis Patterns | No Comments »

Saturday, April 29th, 2017

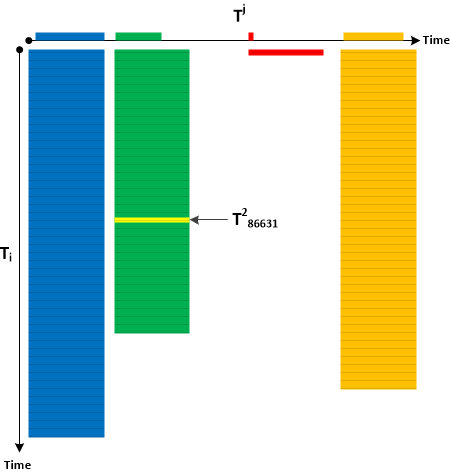

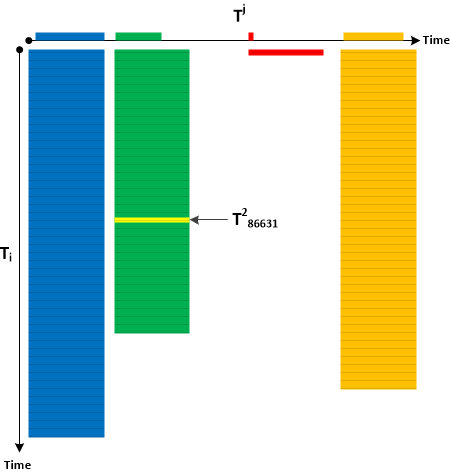

The idea of the Tensor Trace analysis pattern initially appeared in the context of memory dumps as general traces with several special traces inside. It developed further while we were working on the Singleton Trace analysis pattern, when we realized that several Singleton Traces may form a new separate log:

Therefore, we may combine several traces and logs into one global trace where each message references separate local traces and logs:

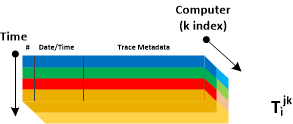

A typical example is repeated tracing. Each trace has an i-th index spanning the number of trace messages; we say it has Ti components. Each individual logging has a j-th index, and the overall global log has Tj components. Together they form a second-rank tensor:

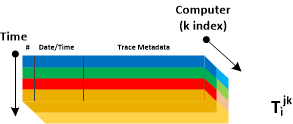

There can be Tensor Traces of the higher rank, for example, the 3rd component spanning computers:

This analysis pattern is different from Meta Trace, which is about trace evolution during software development. It is also different from Trace Dimension, which is about one trace (a Tensor Trace of rank 1).
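A second-rank Tensor Trace for repeated tracing can be sketched as a nested list indexed as `tensor[j][i]`, with j selecting the run and i the message within it. Padding shorter runs with `None` to get a rectangular shape is an assumption of this sketch, as are the function name and the sample runs.

```python
def tensor_trace(runs):
    """Stack repeated traces into a rectangular list of lists: tensor[j][i]."""
    width = max((len(run) for run in runs), default=0)
    return [run + [None] * (width - len(run)) for run in runs]


# Hypothetical repeated tracing of the same scenario.
runs = [
    ["start", "load", "stop"],
    ["start", "load", "Error", "stop"],
    ["start", "stop"],
]
tensor = tensor_trace(runs)

# A global trace where each message references one local trace.
global_trace = [f"run {j}: {sum(m is not None for m in row)} messages"
                for j, row in enumerate(tensor)]
```

A third index, for example one spanning computers, would add one more level of nesting.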

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Global Trace Analysis, Log Analysis, Software Trace Analysis, Trace Analysis Patterns | No Comments »

Saturday, April 29th, 2017

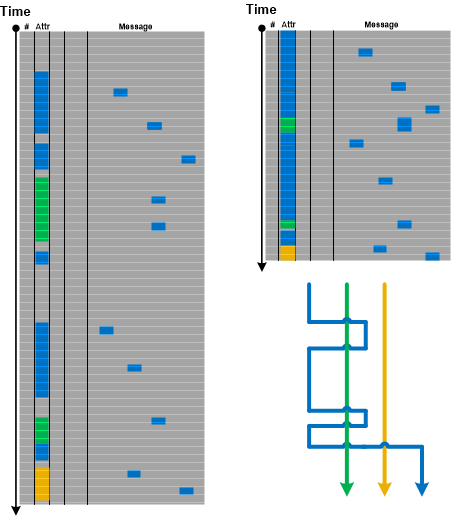

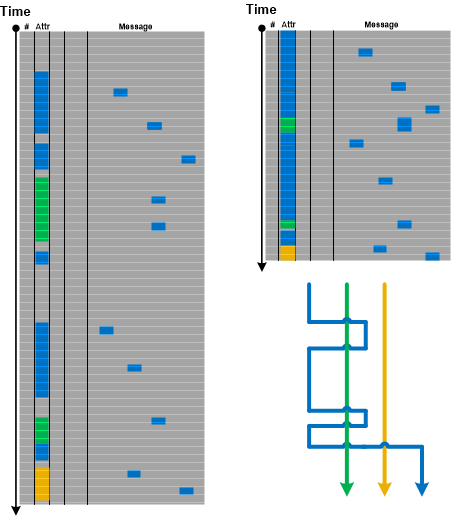

If we consider a log as text, ignore its column structure, and search for a particular attribute value (for example, a PID), we get a Message Set consisting of messages having that attribute value in a column (Adjoint Thread of Activity) and messages referencing that attribute value in their message text. We call this pattern Braid of Activity because, metaphorically, it looks like Adjoint Threads of Activity crossing each other (like multibraiding):
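A small sketch of collecting such a braid, assuming messages are `(pid, text)` pairs. The function name, the "column"/"text" labels, and the sample trace are hypothetical; the labels only record where the value was found.

```python
import re


def braid_of_activity(messages, value):
    """Return messages whose PID column equals `value` or whose text mentions it."""
    mention = re.compile(rf"\b{re.escape(str(value))}\b")
    braid = []
    for position, (pid, text) in enumerate(messages):
        if pid == value:
            braid.append((position, "column", text))
        elif mention.search(text):
            braid.append((position, "text", text))
    return braid


trace = [
    (4120, "created child process 5332"),
    (5332, "loading configuration"),
    (4120, "waiting"),
    (888, "opened handle to PID 5332"),
    (5332, "exit code 0"),
]
braid = braid_of_activity(trace, 5332)
strands = [(position, where) for position, where, _ in braid]
```

The alternation of "column" and "text" entries shows where other Adjoint Threads of Activity cross the one selected by the PID.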

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -

Posted in Log Analysis, Software Trace Analysis, Software Trace Reading, Trace Analysis Patterns | No Comments »