We first introduced the Critical Region pattern in the Accelerated Mac OS X Core Dump Analysis training but didn’t submit the pattern itself to the catalog at that time.

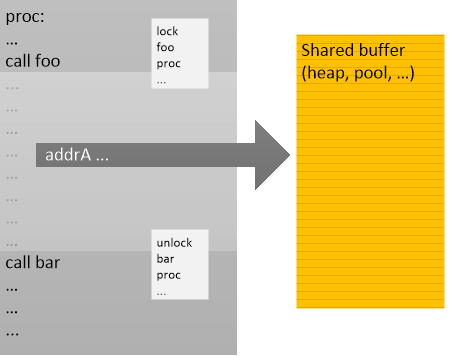

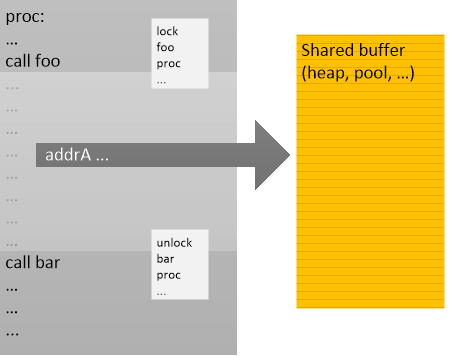

A critical region is usually a region of code protected by synchronization objects such as critical sections and mutexes. However, the Critical Region analysis pattern is about identifying code regions “sandwiched” between contending function calls (which may or may not involve synchronization objects and corresponding synchronization calls, such as those identified in Contention patterns), and then identifying any possible shared data referenced by such code regions:

(gdb) thread apply all bt

Thread 6 (Thread 0x7f2665377700 (LWP 17000)):

#0 0x00000000004151a1 in _int_malloc ()

#1 0x0000000000416cf8 in malloc ()

#2 0x00000000004005a4 in proc ()

#3 0x0000000000400604 in bar_two ()

#4 0x0000000000400614 in foo_two ()

#5 0x000000000040062c in thread_two ()

#6 0x00000000004016c0 in start_thread (arg=<optimized out>)

at pthread_create.c:304

#7 0x0000000000432589 in clone ()

#8 0x0000000000000000 in ?? ()

Thread 5 (Thread 0x7f2664b76700 (LWP 17001)):

#0 __lll_unlock_wake_private ()

at ../nptl/sysdeps/unix/sysv/linux/x86_64/lowlevellock.S:343

#1 0x000000000041886d in _L_unlock_9670 ()

#2 0x0000000000416d22 in malloc ()

#3 0x00000000004005a4 in proc ()

#4 0x0000000000400641 in bar_three ()

#5 0x0000000000400651 in foo_three ()

#6 0x0000000000400669 in thread_three ()

#7 0x00000000004016c0 in start_thread (arg=<optimized out>)

at pthread_create.c:304

#8 0x0000000000432589 in clone ()

#9 0x0000000000000000 in ?? ()

Thread 4 (Thread 0x7f2665b78700 (LWP 16999)):

#0 __lll_lock_wait_private ()

at ../nptl/sysdeps/unix/sysv/linux/x86_64/lowlevellock.S:97

#1 0x0000000000418836 in _L_lock_9558 ()

#2 0x0000000000416c1c in free ()

#3 0x0000000000400586 in proc ()

#4 0x00000000004005c7 in bar_one ()

#5 0x00000000004005d7 in foo_one ()

#6 0x00000000004005ef in thread_one ()

#7 0x00000000004016c0 in start_thread (arg=<optimized out>)

at pthread_create.c:304

#8 0x0000000000432589 in clone ()

#9 0x0000000000000000 in ?? ()

Thread 3 (Thread 0x1ab1860 (LWP 16998)):

#0 0x000000000042fed1 in nanosleep ()

#1 0x000000000042fda0 in sleep ()

#2 0x000000000040078a in main ()

Thread 2 (Thread 0x7f2663b74700 (LWP 17003)):

#0 __lll_lock_wait_private ()

at ../nptl/sysdeps/unix/sysv/linux/x86_64/lowlevellock.S:97

#1 0x0000000000418836 in _L_lock_9558 ()

#2 0x0000000000416c1c in free ()

#3 0x0000000000400586 in proc ()

#4 0x00000000004006bb in bar_five ()

#5 0x00000000004006cb in foo_five ()

#6 0x00000000004006e3 in thread_five ()

#7 0x00000000004016c0 in start_thread (arg=<optimized out>)

at pthread_create.c:304

#8 0x0000000000432589 in clone ()

#9 0x0000000000000000 in ?? ()

Thread 1 (Thread 0x7f2664375700 (LWP 17002)):

#0 0x000000000043ef65 in raise ()

#1 0x0000000000409fc0 in abort ()

#2 0x000000000040bf5b in __libc_message ()

#3 0x0000000000412042 in malloc_printerr ()

#4 0x0000000000416c27 in free ()

#5 0x0000000000400586 in proc ()

#6 0x000000000040067e in bar_four ()

#7 0x000000000040068e in foo_four ()

#8 0x00000000004006a6 in thread_four ()

#9 0x00000000004016c0 in start_thread (arg=<optimized out>)

at pthread_create.c:304

#10 0x0000000000432589 in clone ()

#11 0x0000000000000000 in ?? ()

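From threads #4 and #5 we can identify one such region. Thread #4 is waiting for a lock inside free and returns to proc at 0x400586, while thread #5 is releasing a lock inside malloc and returns to proc at 0x4005a4. The code of proc around these two calls references a shared buffer at 0x6b8fc0, which may further point to heap entries: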

(gdb) disassemble proc

Dump of assembler code for function proc:

0x00000000004004f0 <+0>: push %rbp

0x00000000004004f1 <+1>: mov %rsp,%rbp

0x00000000004004f4 <+4>: push %rbx

0x00000000004004f5 <+5>: sub $0x18,%rsp

0x00000000004004f9 <+9>: callq 0x40ac70 <rand>

0x00000000004004fe <+14>: mov %eax,%ecx

0x0000000000400500 <+16>: mov $0x68db8bad,%edx

0x0000000000400505 <+21>: mov %ecx,%eax

0x0000000000400507 <+23>: imul %edx

0x0000000000400509 <+25>: sar $0xc,%edx

0x000000000040050c <+28>: mov %ecx,%eax

0x000000000040050e <+30>: sar $0x1f,%eax

0x0000000000400511 <+33>: mov %edx,%ebx

0x0000000000400513 <+35>: sub %eax,%ebx

0x0000000000400515 <+37>: mov %ebx,%eax

0x0000000000400517 <+39>: mov %eax,-0x14(%rbp)

0x000000000040051a <+42>: mov -0x14(%rbp),%eax

0x000000000040051d <+45>: imul $0x2710,%eax,%eax

0x0000000000400523 <+51>: mov %ecx,%edx

0x0000000000400525 <+53>: sub %eax,%edx

0x0000000000400527 <+55>: mov %edx,%eax

0x0000000000400529 <+57>: mov %eax,-0x14(%rbp)

0x000000000040052c <+60>: callq 0x40ac70 <rand>

0x0000000000400531 <+65>: mov %eax,%ecx

0x0000000000400533 <+67>: mov $0x68db8bad,%edx

0x0000000000400538 <+72>: mov %ecx,%eax

0x000000000040053a <+74>: imul %edx

0x000000000040053c <+76>: sar $0xc,%edx

0x000000000040053f <+79>: mov %ecx,%eax

0x0000000000400541 <+81>: sar $0x1f,%eax

0x0000000000400544 <+84>: mov %edx,%ebx

0x0000000000400546 <+86>: sub %eax,%ebx

0x0000000000400548 <+88>: mov %ebx,%eax

0x000000000040054a <+90>: mov %eax,-0x18(%rbp)

0x000000000040054d <+93>: mov -0x18(%rbp),%eax

0x0000000000400550 <+96>: imul $0x2710,%eax,%eax

0x0000000000400556 <+102>: mov %ecx,%edx

0x0000000000400558 <+104>: sub %eax,%edx

0x000000000040055a <+106>: mov %edx,%eax

0x000000000040055c <+108>: mov %eax,-0x18(%rbp)

0x000000000040055f <+111>: mov -0x14(%rbp),%eax

0x0000000000400562 <+114>: cltq

0x0000000000400564 <+116>: mov 0x6b8fc0(,%rax,8),%rax

0x000000000040056c <+124>: test %rax,%rax

0x000000000040056f <+127>: je 0x400597 <proc+167>

0x0000000000400571 <+129>: mov -0x14(%rbp),%eax

0x0000000000400574 <+132>: cltq

0x0000000000400576 <+134>: mov 0x6b8fc0(,%rax,8),%rax

0x000000000040057e <+142>: mov %rax,%rdi

0x0000000000400581 <+145>: callq 0x416bc0 <free>

0x0000000000400586 <+150>: mov -0x14(%rbp),%eax

0x0000000000400589 <+153>: cltq

0x000000000040058b <+155>: movq $0x0,0x6b8fc0(,%rax,8)

0x0000000000400597 <+167>: mov -0x18(%rbp),%eax

0x000000000040059a <+170>: cltq

0x000000000040059c <+172>: mov %rax,%rdi

0x000000000040059f <+175>: callq 0x416c90 <malloc>

0x00000000004005a4 <+180>: mov %rax,%rdx

0x00000000004005a7 <+183>: mov -0x14(%rbp),%eax

0x00000000004005aa <+186>: cltq

0x00000000004005ac <+188>: mov %rdx,0x6b8fc0(,%rax,8)

0x00000000004005b4 <+196>: jmpq 0x4004f9 <proc+9>

End of assembler dump.
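
The disassembly above can be read back into C roughly as follows. This is a reconstruction sketch and not the original source: the names `shared_slots`, `SLOT_COUNT` and `proc_iteration` are made up for illustration, and the endless loop of proc (the `jmpq` back to `proc+9`) is reduced to a single pass so that it can be called safely from one thread. The comments map each statement to the instruction offsets it appears to correspond to.

```c
#include <stdlib.h>

/* 0x2710 in the two imul instructions; used here as the array size too,
   which is an assumption (the dump does not show the array bounds). */
#define SLOT_COUNT 10000

/* Hypothetical name for the shared array seen at 0x6b8fc0 in the dump. */
void *shared_slots[SLOT_COUNT];

/* One pass of the loop body of proc (proc+9 .. proc+188).
   Returns the slot index that was used. */
int proc_iteration(void)
{
    int index = rand() % SLOT_COUNT;    /* proc+9   .. proc+57  */
    int size  = rand() % SLOT_COUNT;    /* proc+60  .. proc+108 */

    /* proc+111 .. proc+127: read of the shared slot, no lock taken */
    if (shared_slots[index] != NULL) {
        /* proc+129 .. proc+145: call to free, returns to 0x400586 */
        free(shared_slots[index]);
        /* proc+150 .. proc+155: write to the shared slot, no lock taken */
        shared_slots[index] = NULL;
    }

    /* proc+167 .. proc+175: call to malloc, returns to 0x4005a4 */
    void *block = malloc((size_t)size);

    /* proc+180 .. proc+188: write to the shared slot, no lock taken */
    shared_slots[index] = block;

    return index;
}
```

Read this way, every access to the array happens outside of the locks that free and malloc take internally. If two threads pick the same index, both may pass the same pointer to free, which would be consistent with thread #1 aborting inside free via malloc_printerr, although the dump alone does not prove that this is what happened.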

- Dmitry Vostokov @ DumpAnalysis.org + TraceAnalysis.org -